Forecasting SEO performance means estimating future outcomes from historical data. But search behavior rarely follows stable or linear patterns.

Seasonal demand, anomalies, SERP changes, and measurement issues can all distort your data and lead to unreliable forecasts.

That makes forecasting more complex than running linear regression, exponential smoothing, or asking an LLM to project trends from historical performance.

Here’s how to account for seasonality, detect anomalies, and build more reliable SEO forecasts in Python using models designed for non-linear search data.

SEO forecasting pays the bills, but doesn’t add much value

Decision-makers rely on forecasts to justify investments and align expectations across digital teams. Stakeholders want forward-looking estimates, finance needs revenue projections, and roadmaps require a clear view of expected returns. However, the practical value of these forecasts has diminished.

AI Mode and AI Overviews created a major disconnect between clicks and impressions as LLM-driven scrapers increased bot activity and inflated impression data in reporting tools.

Additionally, Google reported a logging issue affecting Search Console impression data since May 2025. As a result, many forecasts end up serving as reassurance rather than guidance. They shield decision-makers from scrutiny while failing to reflect the business’s actual operating context.

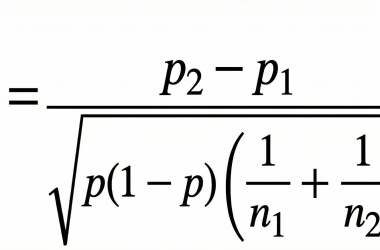

From a data analytics perspective, if search performance followed a stable trend with normally distributed noise, you could rely on linear regression, exponential smoothing, or even a simple moving average (SMA) with confidence.

However, the average SEO forecast still relies on assumptions that don’t hold in organic search:

- Stable trends.

- Normal distributions.

- Consistent relationships between inputs and outputs.

| Technique | Description | When to use | When not to use |
| --- | --- | --- | --- |
| Linear regression | Fits a straight line through historical data to model long-term trends and project future performance. | When traffic or rankings show a consistent upward or downward trend with relatively low volatility. Useful for baseline forecasting and directional planning. | When data is highly volatile, seasonal, or affected by frequent algorithm updates, migrations, or campaign spikes. |
| Exponential smoothing | Applies weighted averages where recent data points have more influence than older ones. Can adapt to short-term changes. | When recent performance is more indicative of future outcomes, such as after site changes, migrations, or content updates. Useful for short-term forecasting. | When long-term trends matter more than recency, or when sharp anomalies may distort recent weighting. |
| Simple moving average (SMA) | Averages values over a fixed window to smooth noise and highlight underlying trends. | When you need to understand data direction, such as smoothing daily traffic for reporting. | When forecasting future performance, because predictions rely on aggregated historical averages and may miss turning points. |

Today’s AI landscape forces a rethink of forecasting as search shifts toward highly volatile and probabilistic outcomes. In other words, a 10% increase in effort no longer translates into a proportional 10% increase in traffic.

Several structural factors are at play:

- Long-tail traffic distribution: A small number of pages typically generate most traffic, while most pages contribute very little.

- Binary user behavior: Many core SEO metrics, such as CTR, are driven by yes/no interactions (click versus no click) that diverge from normally distributed patterns.

- Zero-click search impact: High rankings don’t guarantee traffic – more queries are resolved directly in the SERP, inflating visibility without corresponding clicks.

If you have to forecast, do it properly. Baseline models still have a role:

- Linear regression for directional trends.

- Exponential smoothing for short-term adjustments.

- Moving averages for noise reduction.

There are ways to apply these techniques in Google Sheets. However, they should be treated as descriptive tools, not decision-making systems. To make forecasting useful, you need to move beyond them.
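As a rough illustration of what these three baselines actually compute, here’s a minimal Python sketch on synthetic data. The click series, the 14-day horizon, and the smoothing factor `alpha=0.3` are assumptions chosen for the example, not values from a real property.

```python
import numpy as np
import pandas as pd

# Synthetic daily clicks (hypothetical data, for illustration only)
rng = np.random.default_rng(0)
dates = pd.date_range("2025-01-01", periods=120, freq="D")
t = np.arange(len(dates))
clicks = pd.Series(1000 + 3 * t + rng.normal(0, 40, len(t)), index=dates)

horizon = 14  # days to project forward (assumed)

# 1) Linear regression: fit a straight line through history and extend it
slope, intercept = np.polyfit(t, clicks.to_numpy(), deg=1)
future_t = np.arange(len(t), len(t) + horizon)
linear_forecast = intercept + slope * future_t

# 2) Simple exponential smoothing: recent days weigh more than older ones.
#    In this basic form the forecast is flat at the last smoothed level.
alpha = 0.3  # smoothing factor (assumed)
smoothed_level = clicks.ewm(alpha=alpha, adjust=False).mean()
ses_forecast = np.full(horizon, smoothed_level.iloc[-1])

# 3) Simple moving average: 7-day window to smooth daily noise
sma_7 = clicks.rolling(window=7).mean()

print(f"Estimated daily trend: {slope:.2f} clicks/day")
print(f"Flat exponential-smoothing forecast: {ses_forecast[0]:.0f} clicks/day")
```

Note that the exponential smoothing forecast is a flat line and the moving average only describes the past, which is why both work better as descriptive tools than as decision-making systems.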


Why LLMs aren’t the answer to SEO forecasting

LLMs and MCP connections only compound the inefficiencies listed above. There are two structural problems with this approach.

They assume data behaves linearly

Pre-configured prompts or skills implicitly assume the data follows a smooth, linear trend. This is misleading because SEO data is dominated by seasonality, cyclical demand, and structural breaks. Any system that treats it as smooth or continuous will systematically misrepresent future performance.

They optimize for plausibility, not statistical accuracy

LLMs aren’t forecasting models. They’re probabilistic text generation systems. They assign probability scores to predict token sequences based on patterns observed during training. They’re trained to reward your thinking, not challenge it.

As a result, they can produce confident but ungrounded outputs that lack the business and domain context required to interpret anomalies.

No matter how well engineered the prompt is, the system can still hallucinate – not because it’s “wrong,” but because it’s optimizing for linguistic plausibility, not statistical validity.

Forecasting requires explicit handling of seasonality, non-linearity, and critical interpretation of outputs. These analytical responsibilities can’t be abstracted away through prompting alone.

LLMs can assist with workflows, accelerate analysis, and even help operationalize models. But they can’t replace the role of an analyst in framing the problem, selecting the methodology, and validating the results.

How to do an SEO forecast that accounts for seasonal effects

Asking the right questions is often the hardest part of any analysis.

SEO forecasts are often requested by enterprise stakeholders or pushed by agencies during new business pitches. This typically makes forecasting more straightforward because the research question is already defined upfront.

Either way, the subject of the analysis is usually one of the following search indicators:

- Clicks (search demand).

- Impressions (search visibility).

- Rankings (position distribution).

- CTR (SERP behavior).

For this article, we’ll use Python to forecast synthetic clicks for a fictitious website influenced by seasonal demand.

Retrieving and preprocessing seasonal fluctuations

Based on the scope of analysis, gather historical data from Google Search Console through either the API or Google BigQuery.

While a larger dataset with broader historical coverage is technically better, it may not justify the query costs in BigQuery for an SEO forecast.

Carefully assess the tradeoff between cost, resources, time, and data sampling. You might find that using an API to retrieve as much historical data as possible (e.g., via Search Analytics for Sheets) does the job.

Set up a Google Colab notebook, install the required dependencies, load your dataset with date and clicks as columns, and convert the date column into a datetime index.

Enforce daily frequency to ensure consistency across dates, and quickly fill any missing data gaps using interpolation.

#data viz
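The setup steps above can be sketched as follows. Since the dataset itself isn’t shared here, this sketch builds a synthetic stand-in with `date` and `clicks` columns and drops a few days to simulate gaps. The column names follow the article, while the numbers, the gaps, and the commented-out file name are hypothetical.

```python
import numpy as np
import pandas as pd
import matplotlib.pyplot as plt

# Hypothetical stand-in for a Search Console export with "date" and "clicks".
# With real data you might instead load a file, e.g.:
# raw = pd.read_csv("gsc_clicks.csv")  # hypothetical file name
rng = np.random.default_rng(42)
dates = pd.date_range("2024-01-01", periods=730, freq="D")
t = np.arange(len(dates))
raw = pd.DataFrame({
    "date": dates.strftime("%Y-%m-%d"),
    "clicks": 5000 - 2 * t + 300 * (dates.dayofweek >= 5) + rng.normal(0, 80, len(t)),
})
raw = raw.drop(index=[50, 51, 200])  # simulate missing days

# Convert the date column into a datetime index
df = raw.assign(date=pd.to_datetime(raw["date"])).set_index("date").sort_index()

# Enforce daily frequency (missing days become NaN), then interpolate the gaps
df = df.asfreq("D")
df["clicks"] = df["clicks"].interpolate(method="time")

# Quick visual check of the raw series
fig, ax = plt.subplots(figsize=(10, 4))
df["clicks"].plot(ax=ax, title="Daily clicks")
ax.set_xlabel("Date")
ax.set_ylabel("Clicks")
```

Time-based interpolation is reasonable for short gaps like these. For longer outages, a straight line between two distant points can hide the weekly pattern, so treat those periods with more care.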

Does it look like a linear trend, or can you already spot anomalies?

Data preprocessing involves standardizing and cleaning your dataset to reduce the impact of outliers on your next forecast. This step is often overlooked, yet it’s critical for improving model reliability.

To check whether this matters for your data, we need to assess stationarity, i.e., whether statistical properties such as the mean and variance remain stable over time.

result = adfuller(df['clicks'].dropna())

For context, the test’s null hypothesis is that the series has a unit root, i.e., that it’s non-stationary. A p-value below 0.05 lets you reject that hypothesis and treat the series as stationary.

ADF Statistic: -3.014113904399305
p-value: 0.06246422059834887

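The p-value isn’t convincing here: at roughly 0.062, it sits above the 0.05 threshold, so the null hypothesis can’t be rejected. The series can’t be treated as stationary, and trend or seasonality likely plays a role.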

As discussed, assuming SEO data is stationary (i.e., that its mean and variance stay constant over time) is a flawed heuristic.

SEO data often follows non-linear trends, so relying on simple methods that assume stable data can lead to poor forecasts. Instead, you should decompose the time series and model seasonality.

Seasonality decomposition helps separate true performance trends from recurring patterns such as weekly or monthly cycles.

To do this, we need to zoom in on granular weekly search patterns.

#If data recorded daily, and you want to analyse weekly seasonality (period=7)

The trend plot itself is already suggestive:

- Search interest (clicks) is trending downward.

- Search interest is likely affected by weekly sales cycles – look at the numerous small peaks.

- Search interest likely follows seasonal demand – it ebbs and flows at certain times of year.

However, the residuals plot contains clusters of large spikes, both positive and negative, reaching up to 500,000. These represent anomalies, or outliers, that appear connected to the trend’s inflection points.

This means the model made a “mistake” when decomposing the trend line because it didn’t fully capture sudden spikes.

Handling seasonality in an SEO forecast

To decompose and isolate seasonality, you can use several models depending on the level of complexity and flexibility you need:

| Model | Description |
| --- | --- |
| STL decomposition | A robust technique for separating a time series into trend, seasonality, and residuals. Ideal for revealing the underlying structure in data where patterns vary over time, making it useful for anomaly detection. |
| SARIMAX | ARIMA extended to seasonal data. A statistical model that handles non-stationary data, seasonal patterns, and external independent variables such as algorithm updates. |
| Prophet | Built by Meta for real-world data, it handles multiple seasonalities, missing data, and abrupt shifts. Leveraging additive models, it’s particularly suited for time series with strong seasonal patterns. |
| BSTS | A Bayesian model that captures trend and seasonality while incorporating uncertainty. BSTS is commonly used for counterfactual estimation in causal impact analysis (“what would have happened if X never occurred?”), making it suitable for testing applications such as pre- versus post-analysis. Useful if you want to learn R. |

For this article, we’re going to use STL decomposition for anomaly detection in a “wobbling” (non-stationary) time series.

# Fit STL decomposition (period=7 for weekly cycle)

The red points are extreme values that aren’t explained by either trend or seasonality. However, detecting anomalies isn’t the same as removing them.

In non-stationary time series, variability changes over time (e.g., seasonality, trends, algorithm updates). Removing outliers outright breaks the time index and introduces artificial gaps that bias the actual seasonal impact.

A more robust approach is to replace anomalies with expected values.

df['trend'] = result.trend

Because this approach preserves the time series rows, the forecasting baseline is now protected from bias and artificial gaps. You can validate this by decomposing the cleaned time series again.

result_clean = seasonal_decompose(df['clean_clicks'], model='additive', period=7)

What finally stands out is that once a week (every seven observations), there’s a spike. This suggests peak search demand on Saturday or Sunday, indicating stable and consistent interest patterns.

A few scattered residuals, or anomalies, remain, but they’re rare and random, showing no clustering or drift. This confirms that outlier handling has been effective and the model fit is robust.

At this stage, the time series decomposition is clean enough and ready for forecasting.

Plotting a non-stationary SEO forecast

While you could experiment with SARIMAX or BSTS, this synthetic SEO forecast uses Prophet because it’s well-suited for handling time series with strong seasonality.

Using our anomaly-free dataset with a preserved time index, Prophet can forecast click performance over the next 90 days. To add more context, you can introduce a regressor to flag external factors such as Google core updates or measurement issues.

In this example, you can apply a flag to account for the Google Search Console logging issue that artificially inflated impressions between May 2025 and April 2026.

The code below generates a 90-day forecast and outputs a line chart, with the option to export the forecast as an .xlsx table.

Note that the lower and upper bounds represent Prophet’s uncertainty interval (80% by default), indicating the range within which clicks are expected to fall over the forecast horizon.

prophet_df = df[['clean_clicks']].reset_index()


SEO forecasting isn’t usually linear

SEO forecasting isn’t about projecting neat, linear trends – it’s about understanding messy, non-stationary data shaped by seasonality, anomalies, and external shocks.

By cleaning data properly, modeling seasonality, and accounting for real-world distortions such as SERP changes and tracking issues, forecasts become less about false certainty and more about informed direction.

While the goal isn’t perfect accuracy, a robust approach to forecasting non-stationary time series is essential for framing stakeholder expectations within a realistic range and making better decisions.