With the growth of AI and Large Language Models, more and more people want to get answers from their own data sources. That makes it critical to integrate ways of searching and retrieving data from different sources and passing the results to these AI models.

Retrieval Augmented Generation, or RAG, is a potential solution for this.

If you’re eager to discover how RAG can transform the way you access and utilize your data, read on.

AI and Generating Human-Like Responses 🙋‍♂️

Tracing back to 2013, the AI landscape was vastly different. The buzz was about simple neural networks, the foundational building blocks that paved the way for future advancements. People were happy if they could quickly train and get good scores on MNIST.

Later, we got deep neural networks capable of performing tasks in areas like image and speech recognition, outperforming traditional algorithms.

But the real change came in November 2022, when OpenAI launched ChatGPT. It took the world by storm, amassing more than 100 million users in just two months, and it remains one of the most significant moments in internet and AI history.

Not just because it could perform multiple tasks, but because its capabilities surprised many people. Since then, large language models have spread everywhere: we’re seeing the rise of AI startups and enterprises developing their own large language models.

Which brings us to the question: can anyone take their own data, pass it to an LLM, and get it to generate insights on the fly?

But before this, let’s discuss the current challenges with AI Large Language Models.

Limitations of AI Large Language Models 🔚

While Large Language Models are doing a really great job, relying on them is more challenging. Consider this headline:

ChatGPT really took this person’s job.

If you are a regular user of LLMs or have tried to test their limits, you must have noticed a severe problem: hallucination. It’s when a large language model generates answers that sound plausible but are made up, whether or not the things it describes exist. In the above example, ChatGPT provided reference cases that didn’t exist.

This stops enterprises, hospitals, and other businesses from immediately introducing AI models into their day-to-day work. However, hallucination isn’t the only problem faced by state-of-the-art Large Language Models. There’s more, and here’s a list 👇

- Training Cutoff: There is a cutoff date after which training stops. The model therefore knows nothing about events after that date.

- Outdated Training Data: Data is dynamic, but a trained model’s knowledge is static. To keep up with ongoing changes, we need to retrain the AI model, which brings us to the third problem.

- Cost: AI Models don’t sit on hard drives. They take up RAM, CPU, and GPU to run and train. These are costly; the hardware expense of retraining the large language model can quickly skyrocket.

- Accuracy and Bias: LLMs are prone to errors due to biases in the training data, lack of common-sense reasoning, and the model’s way of being trained. This depends on the team of engineers as well.

- LLM Hacking and Hijacking: Recent research has started to show that LLMs can be hacked and made to reveal potentially dangerous information.

Like any other software, these AI models aren’t 100% perfect and are continually being improved. Now let’s understand what RAG is and why it’s important.

Retrieval Augmented Generation aka RAG ✨

Retrieval Augmented Generation (RAG) is a recent advancement in Artificial Intelligence. It’s a form of Open Domain Question Answering with a Retriever and a Generative AI Model. It combines a search system with AI models like ChatGPT, Llama2, etc. With RAG, the system searches a vast knowledge base for up-to-date data and articles. This data is then used by the AI to give precise answers. This method helps reduce errors in AI responses and offers more customized solutions.

So, with RAG, the retriever (or searcher) can access the latest data, sources, and other important articles from a very large knowledge base, and then provides them as input to the Generative AI Model. Hence the name Retrieval Augmented Generation. This approach allows the Large Language Model to tap into a vast knowledge base and provide relevant, to-the-point information.

This significantly reduces the hallucination problem faced by large language models, and it can provide answers tailored to you.
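To make the retriever-plus-generator idea concrete, here is a minimal sketch in Python. It is an illustration under simplifying assumptions, not how any particular product implements RAG: the knowledge base, the file names, and the `retrieve` and `build_prompt` helpers are all hypothetical, retrieval is a plain word-overlap count rather than a real search engine, and the final call to a language model is left out.

```python
import re
from collections import Counter

# Hypothetical knowledge base; in practice these would come from your own data sources.
KNOWLEDGE_BASE = [
    {"source": "release-notes.txt", "text": "Version 2.0 of the product shipped in March and added offline mode."},
    {"source": "faq.txt", "text": "Refunds are processed within five business days."},
    {"source": "handbook.txt", "text": "Employees can work remotely up to three days per week."},
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query, documents, top_k=2):
    """Retrieval step: keep the documents sharing the most words with the query."""
    query_tokens = Counter(tokenize(query))
    scored = []
    for doc in documents:
        doc_tokens = Counter(tokenize(doc["text"]))
        overlap = sum((query_tokens & doc_tokens).values())
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_prompt(query, retrieved):
    """Augmentation step: put the retrieved text, with its sources, in front of the question."""
    context = "\n".join(f"[{doc['source']}] {doc['text']}" for doc in retrieved)
    return (
        "Answer the question using only the context below. "
        "Cite the source in brackets.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


question = "How long do refunds take?"
docs = retrieve(question, KNOWLEDGE_BASE)
prompt = build_prompt(question, docs)
print(prompt)  # This prompt is what you would send to the generative model.
```

The generation step is then a single call to whichever model you use, with `prompt` as the input. Because the context carries source labels, the model can be asked to cite them in its answer.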

Why Use Retrieval Augmented Generation? Aren’t Current AI Models Enough?

Current-generation AI models have a cutoff date after which they stop training. Because of that, asking about events that happened after the cutoff, or trying to retrieve recent information, can be a challenge.

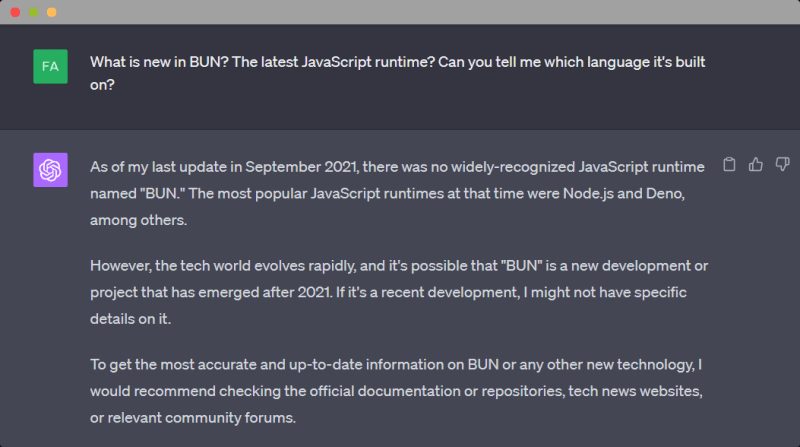

Take a look at this example of asking ChatGPT about BUN.

ChatGPT Recommends:

I would recommend checking the official documentation or repositories, tech news websites, or relevant community forums.

Can we not send the text of these documents, repositories, websites, and community forums directly to ChatGPT and get the relevant answers? This is where RAG helps.

And it’s not just about BUN. What if, in the same manner, you wanted to query your company’s data and get insights and answers relevant to your own data without making it public?

This is where Retrieval Augmented Generation shines, providing answers with sources. And when it comes to connecting multiple data sources, Swirl can help you solve the problem quickly:

- Connect to various data sources.

- Perform query processing via ChatGPT.

- Re-rank results and return the top-N answers via spaCy’s large language model (Cosine Relevancy Processor).
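As a rough illustration of what cosine-based re-ranking means, the sketch below scores results from several sources against the query and keeps the top N. It is not Swirl’s implementation: it assumes simple word-count vectors instead of spaCy’s word vectors, and the `vectorize`, `cosine`, and `rerank` helpers and the sample results are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text):
    """Turn text into a bag-of-words vector (word -> count)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rerank(query, results, top_n=2):
    """Score every result against the query and return the top_n (score, result) pairs."""
    query_vector = vectorize(query)
    scored = [(cosine(query_vector, vectorize(r["snippet"])), r) for r in results]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_n]


# Hypothetical results, as they might come back from three separate sources.
results = [
    {"source": "wiki", "snippet": "Quarterly sales report for the retail team"},
    {"source": "tickets", "snippet": "Printer on floor three is out of toner"},
    {"source": "drive", "snippet": "Sales report"},
]

top = rerank("sales report", results)
for score, result in top:
    print(f"{score:.2f}  {result['source']}: {result['snippet']}")
```

The point of the design is that results from different sources are scored on one common scale, so a single ranked list can be handed to the language model regardless of where each result came from.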

We understand that for enterprise customers, time is of the essence.

Metapipe (Enterprise) allows for a fast RAG pipeline setup for enterprise customers, providing quick answers. If you want to learn more about how Metapipe (Enterprise) can benefit your organization, don’t hesitate to contact the Swirl Team for further information.

RAG vs. Fine-Tuning

What is Fine Tuning?

Fine-tuning a Large Language Model means further training an existing model on a task-specific dataset to make it really good at a subtask. People have fine-tuned the Llama 2 LLM for various tasks like writing SQL, Python code, etc.

While this works well for tasks with massive and static data like Python syntax or SQL, the problem comes when you want to train the model on something new or when no large dataset is available.

If the dataset keeps changing, you must retrain the model to keep up with the changes, and this is expensive.

Consider training it on the documentation of BUN, Astro, Swirl, or your company’s documents. Also note that fine-tuning makes the model good at a specific task, but you may not be able to trace an answer back to its source or get relevant citations for it.
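By contrast, a retrieval-based setup handles changing data by updating the search index, not the model. The sketch below is a toy example under stated assumptions: `DocumentIndex` is a hypothetical in-memory inverted index, the document paths are made up, and re-adding an existing id is not handled. It shows that a newly added document becomes searchable immediately and comes back with an id that can serve as a citation.

```python
import re


class DocumentIndex:
    """A tiny in-memory inverted index: token -> set of document ids."""

    def __init__(self):
        self.documents = {}  # doc_id -> original text
        self.postings = {}   # token -> set of doc_ids containing that token

    @staticmethod
    def _tokens(text):
        return set(re.findall(r"[a-z0-9]+", text.lower()))

    def add(self, doc_id, text):
        """Index a new document. No model retraining is involved."""
        self.documents[doc_id] = text
        for token in self._tokens(text):
            self.postings.setdefault(token, set()).add(doc_id)

    def search(self, query):
        """Return (doc_id, text) pairs sharing at least one word with the query, best first."""
        hits = {}
        for token in self._tokens(query):
            for doc_id in self.postings.get(token, ()):
                hits[doc_id] = hits.get(doc_id, 0) + 1
        ranked = sorted(hits, key=lambda doc_id: (-hits[doc_id], doc_id))
        return [(doc_id, self.documents[doc_id]) for doc_id in ranked]


index = DocumentIndex()
index.add("docs/install.md", "Install the tool with the package manager.")

# Before the new document exists, the question has nothing to retrieve.
before = index.search("changelog for version 3")

# The data changes: add the new document and it is searchable right away.
index.add("docs/changelog.md", "Version 3 adds a plugin system.")
after = index.search("changelog for version 3")

print(before)
print(after)
```

Each hit keeps its document id, so whatever answer the model generates from it can point back to the file it came from.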

Can you do fine-tuning?

Answer these questions:

- Do you have the engineers and hardware required for training a Large Language Model?

- Do you have the data necessary to get good answers from the Large Language Model?

- Do you have time?

If the answer to any of these three questions is “no,” then you need to reconsider fine-tuning and opt for a better and more accessible alternative.

RAG Fits the scenarios where Fine Tuning Doesn’t.

- Small documentation sets.

- Articles, research papers, blogs.

- Newly created code bases, etc.

Generating answers from them is easier than you think. While there are many options for creating a RAG pipeline, Swirl makes both parts, Retrieval and Generation, easier.

Swirl can search and provide the top-N best answers from the search query and software models. Check our GitHub.

But, if you are an enterprise customer, Swirl’s Metapipe can provide a much faster way to generate answers. The latter is for enterprise customers, while the former remains open source.

Contribute to Swirl 🌌

Swirl is an open-source library in Python 🐍, and we’re looking for people to help build this software. We’re looking for fantastic people who can:

- Create excellent articles, enhance our readme, UI, etc.

- Contribute by adding a connector or search provider.

- Join our community on Slack.

It would mean a lot if you could give us a 🌟 on GitHub. It keeps the team motivated. 🔥

swirlai/swirl-search

Swirl queries anything with an API then uses Large Language Models to re-rank the unified results without copying any data! Includes zero-code configs for Apache Solr, ChatGPT, Elastic Search, AWS OpenSearch, PostgreSQL, Google BigQuery, Generic HTTP/S, Google PSE, NLResearch.com, Miro, Microsoft 365, HubSpot, Atlassian, YouTrack, GitHub & more!

Swirl

Swirl 🌌 is an open source search platform to seamlessly connect databases, warehouses, search providers, and data siloes. Dive deep, unveil hidden insights, and navigate your data effortlessly. Whether you’re a startup or a large enterprise, Swirl is tailored for you.

Use Swirl to search within your data 🔍. Swirl connects with Large Language Models GPT to provide insights and answers from your own data source. Enabling you to perform Retrieval Augmented Generation (RAG) on your own data.

Swirl is built in Python along with Django. Swirl is intended for use by anyone who wants to solve multi-silo search problems without moving, re-indexing or re-permissioning sensitive information.

How Swirl Works

Swirl adapts and distributes user queries to anything with a search…